GenAI, is it fantastic?

Quite a few people have shared their takes on GenAI for development in a very short time, so I realize it’s high time I published this draft I started over two weeks ago 🙃.

For work, I use AI assistants more and more to help me with my daily tasks. In 2024, it was mainly for automating tedious chores (scripting, or grinding through a painful list of repetitive tasks without bothering to code a proper solution). Throughout 2025, I tested it several times for Ops work, and each time the results were mediocre, both for designing coherent, reliable and efficient infrastructure and for incident resolution assistance (see my article on the topic).

In 2026, on that last point, I feel we still haven’t made progress, though I’ll admit there are specialized tools, open source or not, that I haven’t tested enough yet: k8sgpt and HolmesGPT on the open source side, and Anyshift with its SRE agent for root cause detection and incident resolution.

Note: fun fact, in his post “My position on Generative Artificial Intelligence” posted just before mine, Alex cites a long list of professions that will benefit from GenAI, and ops folks get nothing more than yet another argument to switch to Kubernetes 😭.

On the other hand, I’ve started using GenAI to build several web projects from scratch (vibe coding?), which I’ve already told you about:

- Converting a blog from Bloggrify to Hugo (French only)

- Creating a “pretty” website (way beyond my skills) to host the research I’ve done on the different ways to deploy Kubernetes

- Improving my website’s security (French only) by automating modifications to the Hugo theme I use, to get the best score on Mozilla HTTP Observatory

In all 3 cases, the result is there. Or rather, it seems to be, to the layman that I am.

I’ve never hidden that I have zero front-end skills, and maybe for a domain expert, what I did with AI is horrible (or not?). In any case, it can’t be worse than the UIs I made without it. Small example 😄:

or rather: GêneAI? (sorry, that’s a French pun I can’t really translate: “gêne” means embarrassment)

This is the kind of feedback you see from people who are actually experts in a domain when they watch novices get excited. We have good examples with AI-generated videos: if you’re not paying attention, you think it’s incredible. But anyone with a slightly critical eye immediately sees BIG consistency problems. Same goes for image generation, or music.

It’s also true for code, and there are great examples of vibe-coded projects that are horrific spaghetti messes and cybersecurity sieves. In short, you get the idea: even though I’m a daily user, I’m not “amazified” (as my kids would say) by LLMs, even the best ones.

Yet, I have several friends who swear by them, including people far more brilliant/intelligent (call it what you will) than me. So I thought “OK, maybe I’m doing it wrong. Let’s try with the best there is”: a “Claude Code”-type IDE and an Opus model.

The editor

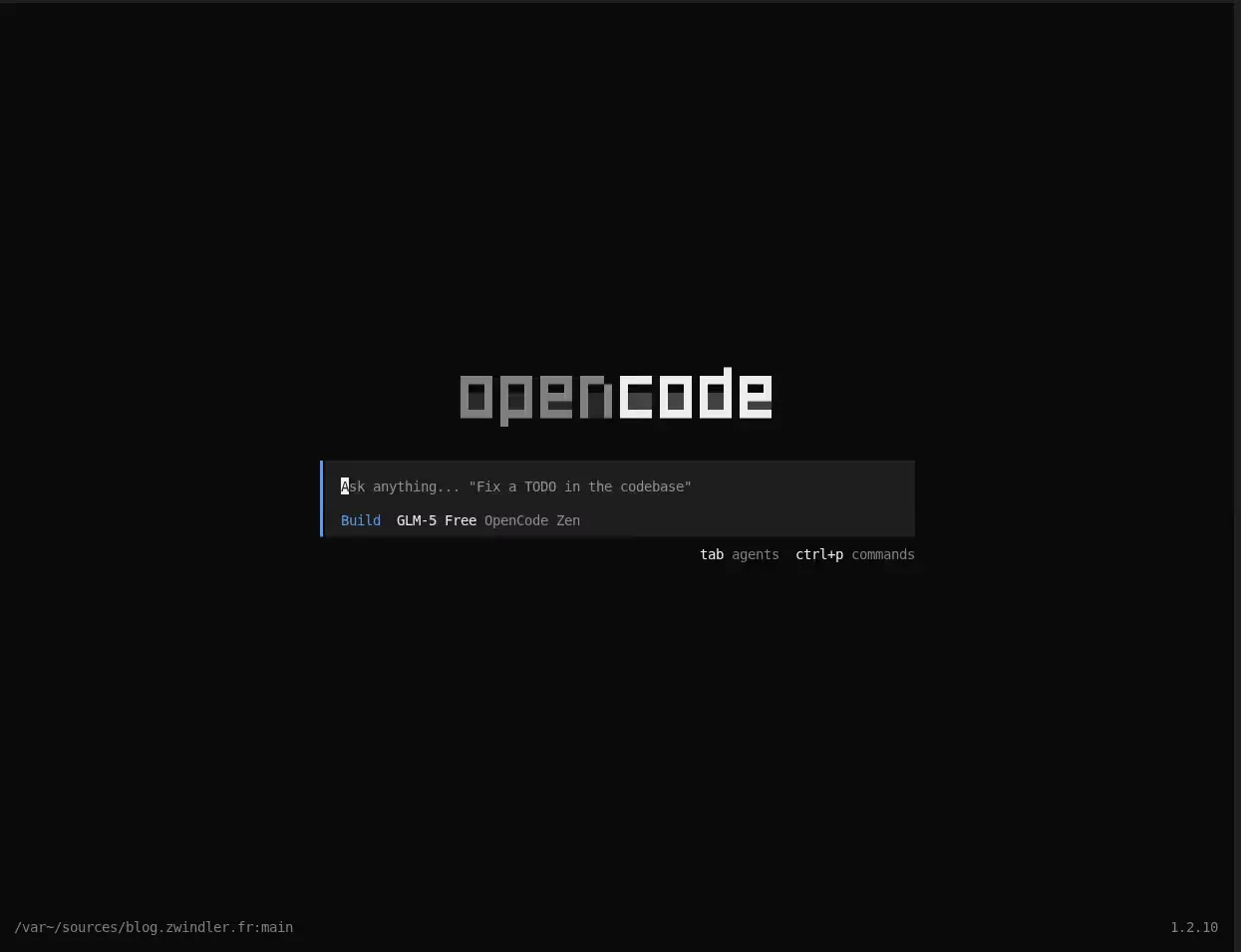

After a quick market survey, you realize the options are plentiful: Claude Code, OpenCode, Amp Code, …

The problem with Claude Code is the entry price. I’m not yet ready to spend 100 or 200€ per month for the use I have today. I don’t know if the 20€ plan would be enough. Beyond the rare personal side projects I mentioned above, I don’t code much. Most of my geeky activities are infrastructure: installing Kubernetes clusters and virtualization OSes. Stuff that’s hard to automate with an LLM.

Pierre (mostly) recommended Amp Code for personal use, mainly because it has a free tier with a token allowance that goes quite a long way if you’re not too greedy. I preferred to do things my own way and test OpenCode instead, which has the advantage of being more flexible about LLM providers and token consumption. It’s even possible to use local models (and therefore free ones) or models offered for free (at least for now) such as GLM 5 Free, which performs quite well among dev models (and, most importantly, is free…).

OK, we have the editor. Now, the code?

To get a sense of the relevance of the code generated by my favorite LLMs (Sonnet and Opus, for a while now), I need a language and a use case that I master, so I can tell it “no, that’s absolute nonsense!?”.

A use case I master… as you might guess, there will inevitably be some Kubernetes in there.

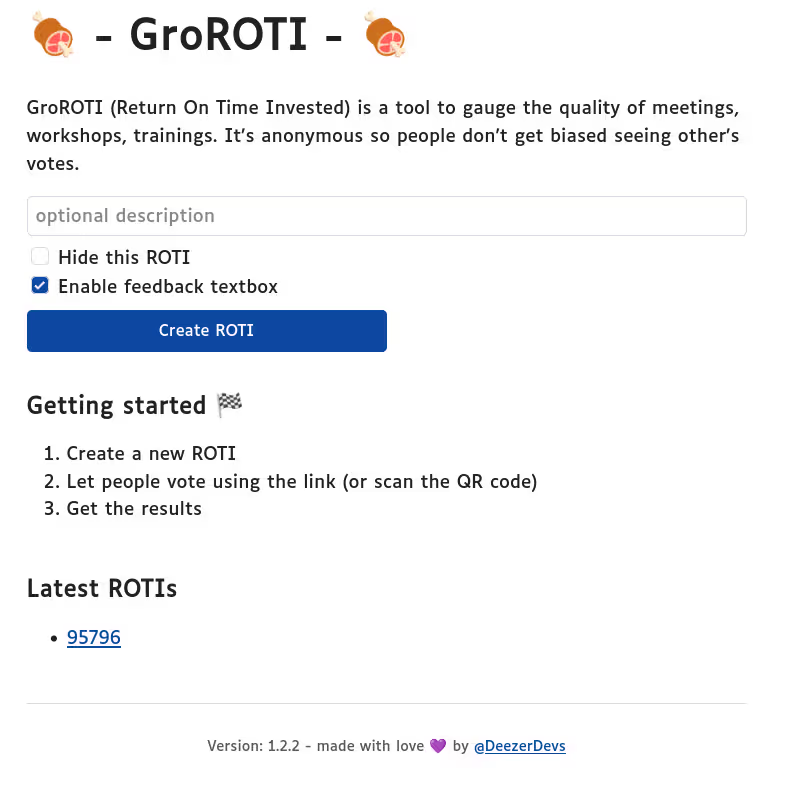

A language I master: I learned Go in 2022 thanks to a colleague at Deezer (thanks again Martial!) and I’ve published a few tools and made minor contributions. I wrote a large part of the GroROTI tool and also started (but never finished) developing an RPG in Go, heavily inspired by Castle of the Winds, a game from my childhood: gocastle. And I use it at a professional level in my day-to-day work (even if it’s not the core of my job).

After the context, the project

OK, we have the technical context: Kube + Go. Now we need the idea to implement. The project needs to be large enough for the test to be meaningful, fun enough that I want to spend personal time on it, but still somewhat useful so I’d want to talk about it and show progress. And above all, a project whose business logic stays within my reach: to honestly evaluate the quality of AI-generated code, I need to be able to read and critique it.

And there, in my overflowing drawer of dumb ideas, I remembered this “pitch” from 2023-2024, which I’d left to rot for lack of time:

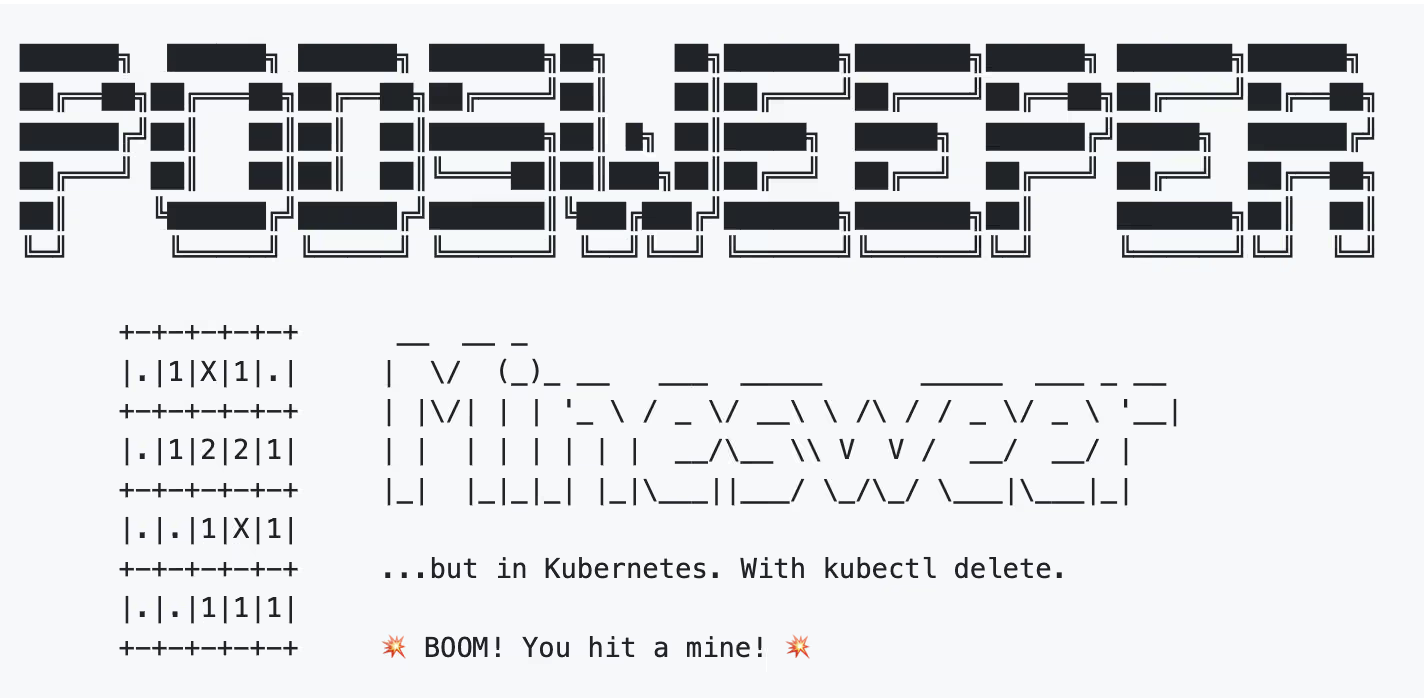

PodSweeper: the most complex, over-engineered and chaotic way to play Minesweeper.

Don’t leave, you’ll see it’s fun. Really!

Instead of a visual grid where you click on cells hoping not to hit a “mine”, we have a virtual “grid” where each cell is a Pod and the click is a kubectl delete.

Yes, it’s dumb. I like it a lot :).
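For readers who haven’t played Minesweeper in a while, the core rule the game has to reproduce fits in a few lines. Below is a minimal Go sketch, not PodSweeper’s actual code: the `Grid` type, the `pod-<row>-<col>` naming scheme and the mine positions are hypothetical. Each cell maps to one Pod name, and the proximity hint revealed when you delete a safe Pod is the number of mines among its (up to) 8 neighbors.

```go
package main

import "fmt"

// Grid is a hypothetical in-memory model of the board: each cell is backed
// by one Pod, and mines[r][c] is true when that Pod hides a mine.
type Grid struct {
	Rows, Cols int
	mines      [][]bool
}

// NewGrid builds a rows x cols board with mines at the given positions.
func NewGrid(rows, cols int, minePositions [][2]int) *Grid {
	m := make([][]bool, rows)
	for r := range m {
		m[r] = make([]bool, cols)
	}
	for _, p := range minePositions {
		m[p[0]][p[1]] = true
	}
	return &Grid{Rows: rows, Cols: cols, mines: m}
}

// PodName maps a cell to its (hypothetical) Pod name, e.g. "pod-1-2".
func PodName(r, c int) string {
	return fmt.Sprintf("pod-%d-%d", r, c)
}

// Hint counts the mines in the 8 cells surrounding (r, c),
// ignoring neighbors that fall outside the board.
func (g *Grid) Hint(r, c int) int {
	count := 0
	for dr := -1; dr <= 1; dr++ {
		for dc := -1; dc <= 1; dc++ {
			nr, nc := r+dr, c+dc
			if (dr == 0 && dc == 0) || nr < 0 || nr >= g.Rows || nc < 0 || nc >= g.Cols {
				continue
			}
			if g.mines[nr][nc] {
				count++
			}
		}
	}
	return count
}

func main() {
	// 3x3 board with mines in the top-left and bottom-right corners.
	g := NewGrid(3, 3, [][2]int{{0, 0}, {2, 2}})
	// "Clicking" the center cell means deleting pod-1-1; both mines touch it.
	fmt.Printf("%s: %d\n", PodName(1, 1), g.Hint(1, 1)) // pod-1-1: 2
}
```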

Beyond the deliberate trolling (over-engineering from hell) of this game, there’s actually an educational objective behind it.

Really, there is.

I designed the game as an introduction to Kubernetes security, with difficulty levels to unlock, CTF-style.

At the beginning, you can do everything. You can of course play normally (delete a Pod, see if there are mines nearby) to get the hang of it. You can also automate actions (a script that “clicks a cell” at random, retrieves proximity hints for mines, clicks somewhere safe, …).
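The “clicks somewhere safe” part of such a script boils down to the two classic Minesweeper deduction rules. Here is a hedged Go sketch, assuming the script has already collected the revealed hints into a 2D slice. The `Hidden`/`Flagged` sentinel values and the `Deduce` function are hypothetical, and how PodSweeper actually exposes the hints is not covered here.

```go
package main

import "fmt"

// Hypothetical encoding of what a player script knows about the board:
// values 0..8 are revealed proximity hints, plus two sentinel values.
const (
	Hidden  = -1 // Pod not deleted yet
	Flagged = -2 // cell the script already believes is a mine
)

// Cell is a board coordinate.
type Cell struct{ R, C int }

// neighbors lists the in-bounds cells around (r, c).
func neighbors(board [][]int, r, c int) []Cell {
	var out []Cell
	for dr := -1; dr <= 1; dr++ {
		for dc := -1; dc <= 1; dc++ {
			nr, nc := r+dr, c+dc
			if (dr != 0 || dc != 0) && nr >= 0 && nr < len(board) && nc >= 0 && nc < len(board[nr]) {
				out = append(out, Cell{nr, nc})
			}
		}
	}
	return out
}

// Deduce applies the two basic rules to every revealed cell:
//   - if flagged neighbors already account for its hint, its other hidden
//     neighbors are safe to "click" (kubectl delete);
//   - if flagged + hidden neighbors equal its hint, every hidden neighbor is a mine.
func Deduce(board [][]int) (safe, mines []Cell) {
	seenSafe, seenMine := map[Cell]bool{}, map[Cell]bool{}
	for r := range board {
		for c, hint := range board[r] {
			if hint < 0 {
				continue // only revealed cells carry information
			}
			flagged := 0
			var hidden []Cell
			for _, n := range neighbors(board, r, c) {
				switch board[n.R][n.C] {
				case Flagged:
					flagged++
				case Hidden:
					hidden = append(hidden, n)
				}
			}
			for _, h := range hidden {
				switch {
				case flagged == hint && !seenSafe[h]:
					seenSafe[h] = true
					safe = append(safe, h)
				case flagged+len(hidden) == hint && !seenMine[h]:
					seenMine[h] = true
					mines = append(mines, h)
				}
			}
		}
	}
	return safe, mines
}

func main() {
	board := [][]int{
		{0, 0, 0},
		{1, 1, 0},
		{Hidden, Hidden, Hidden},
	}
	safe, mines := Deduce(board)
	fmt.Println("safe:", safe, "mines:", mines) // safe: [{2 1} {2 2}] mines: []
}
```

On this example board, the `0` at the end of the middle row proves its two hidden neighbors are safe; once they are deleted and their hints revealed, a second call flags the bottom-left cell as a mine.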

But you can also cheat.

And that’s the whole point of the game. In the first difficulty levels, the game’s Kubernetes manifests are deliberately vulnerable. So you can win effortlessly, if you know where to look.

However, the levels ramp up quickly and restrictions come with them. Pretty soon, you reach production-grade best practices that prevent any “cheating”. And if you find unintended vulnerabilities in the game code itself… well, that’s a bonus 😈.

The initial process

It’s probably a mistake, but I did the entire ideation phase with Gemini before launching OpenCode. The experience would probably have been better (at least more representative) if I’d started directly with OpenCode.

I dumped everything I had in mind, along with a big shapeless block of dozens of lines from my notes from when I had the idea in 2023, and asked it to produce a SPECIFICATIONS.md file with all the important information, put back in order.

Once the specs were written, I asked it to detail the different levels and the difficulties we would progressively add, in GAMEPLAY.md.

Finally, I asked it to break down the project into “issues” by priority, so I could get an MVP quickly: ISSUES_PRIORITY.md. My idea was to give OpenCode the very detailed, finely sliced battle plan and let it run.

First disappointment: Gemini 3 Pro is pretty bad at this. The tasks were credible(-ish) but ordered randomly (task dependencies not respected). So I launched OpenCode for the first time in my repo and told it “here’s the context, here’s what we came up with for tasks. What do you think?”

The good (open)code…

Among the pretty nice things: OpenCode + Claude Opus immediately saw that the plan created by Gemini was flawed, and asked me questions and proposed a breakdown that was still imperfect but already more coherent. This is probably where the main value of these tools lies. They embed knowledge to guide software development and above all (ABOVE ALL) bring structure.

Pretty quickly, we agree on a plan I like. OpenCode updates the documents and starts developing (scaffolding, creating pipelines, first empty binaries and Docker images). We have a project ready to develop in a snap, which is quite exciting, at first glance.

LLM assistants are very eager to jump into code, and this remains true with OpenCode + Opus. Even though we hadn’t finished discussing the plan and possible options, it kept spontaneously proposing to move on to code. That said, it was the same (or worse) with Gemini, which I had specifically told we wouldn’t be writing any code at all.

Very quickly, file creations pile up. I struggle to keep up and at each pause (when the LLM has finished a task and asks if it can move to the next), I spend long minutes reading what the LLM produced. It’s both exhilarating and exhausting (re-reading all that is hard on my rusty brain).

The raw speed is impressive. In 3 sessions of 1 to 2 hours, I have a working MVP. I understand why people regularly talk about 10x engineering when discussing code generation with recent LLMs. Setting aside raw lines of code generated (which isn’t a meaningful metric), roughly speaking, functional code is produced 3x to 10x faster than what I could have done alone. Features that would have taken me hours to implement end to end (grid generation, reveal logic, game state management) land in minutes. You’ve read it elsewhere and I’ll say it too: the bottleneck is no longer the code I type, it’s the code I have to review.

From what I’ve read, the code is correct, particularly in the first iterations, a bit less so after a while. But when I say “correct”, I might be underselling it: the code produced is idiomatic Go. Good package structure, naming conventions respected, error handling in Go style (when it’s there…), appropriate use of interfaces. This isn’t just code that compiles: it’s code a Go reviewer wouldn’t reject outright. We’re aiming for an MVP so the business logic remains very simple, but the foundation is clean.

Everything is tested: very quickly, the project has perfect coverage and 100+ tests while it doesn’t even do anything yet. If this were human-written code, I’m not sure that would be a good thing: we’re absolutely not doing TDD, and a lot of the tests check useless things. In the case of LLM-generated code, it’s probably for the best, because the LLM tends to introduce regressions fairly regularly (we’ll come back to that). So it’s interesting here, because:

- it costs almost nothing in dev time (it’s so fast to generate)

- it allows the LLM to realize its code introduced a regression, and fix it on its own, without waiting.

… and the bad (open)code

On the tool and model side first, a few irritants:

- By default, OpenCode doesn’t encourage the LLM to run `golangci-lint` on every commit. You fix this with a pre-commit hook and/or the famous AGENTS.md / skills, but it SHOULD have been part of the basic kit for a golang project (tests are there…).

- The LLM (Claude Opus on OpenCode, but this is a common bias even outside of it) loves software versions that existed at the time of its training. You have to constantly remind it that the versions it suggests are outdated. This is true for everything: Go code, dependencies, GitHub Actions versions.

- You very often hit the 100k token limit. You waste time “compacting” and lose precision. Typically: the LLM asks if it can `git commit` its changes, the context gets compacted before I answer, and it commits without my permission while rebuilding its context. For code this low-stakes, it’s fine. But in prod, that would be a real problem.

- The LLM is often amnesiac: it forgets it has access to a kind cluster for real testing (even though it used it just before the previous compaction, for example).

On the code quality side, it’s more concerning:

- Lax error handling. Some errors that should have been fatal (a controller that can’t initialize properly!) are simply logged at ERROR level. You end up shipping versions that fail silently. Again, this can probably be fine-tuned with an AGENTS.md.

- Race conditions. I ran into race conditions fairly quickly, and pretty silly ones. In my case, Pods stuck in Terminating impacted the next game (poor graceful shutdown handling). Opus went into a loop without understanding the root cause. I had to stop it and specify that it should never start a new game without making sure the previous one was finished.

- Code generation outside the lines. Sometimes, without warning, the LLM adds a feature that wasn’t requested, is useless, or even contradicts the previously written SPECIFICATION. You have to slap it back into line.

Once put back on track, the LLM rolls again, but these episodes clearly show that for concurrent code or outside of ultra-strict user stories (which goes beyond OpenCode’s default workflow), human supervision remains essential.

People will tell me I’m a beginner with these tools, and I could have avoided some pitfalls by better configuring my environment (AGENTS.md, pre-commit hooks, etc.). That’s true.

But that’s exactly where the shoe pinches: one of the hyped promises of GenAI applied to development is to enable non-developers (or beginners) to produce quality software at high speed, all the way to shipping a complete product. If getting the most out of it requires being an experienced developer who knows how to configure a complex environment (with the specifics of these AI tools), anticipate race conditions and review concurrent code… we haven’t democratized development, we’ve just given another tool to people who already knew how to code. My profile (an ops person who codes, not a pure dev) was precisely the target audience of this promise. And today, the math doesn’t add up.

Where am I at?

I’ve been talking about PodSweeper for a while now. But where is this project?

After 3 sessions of 1 to 2 hours max, not counting ideation, I have a working MVP. As I said earlier, the productivity gain is undeniable, for someone who isn’t a “developer” by trade.

The code is probably “overall” of better quality than the Go code I could have written, but the LLM lets gaping holes slip through in the business logic (simply because it doesn’t think, while I do. Well, normally). Not great for trust… You really have to remain very wary of everything it produces.

The code is available at:

You can go check out the code to form your own opinion. If you’re not afraid of spoilers, you can read the SPECIFICATION.md (spoilers), or even GAMEPLAY.md (I detail all the levels there so it’s mega spoilers).

You can (normally) play the first difficulty levels. It’s still fairly basic: if you’ve already used kubectl, you should find the solution very quickly. My goal is to add levels over time; I’ve already imagined 10, some of them quite devious 😈, and others might come later.

The code is under MPL v2.0, a copyleft license I like: it’s not very restrictive, it’s OSI-approved, and it still requires you to share your changes back if you modify the code and distribute it.

There you go :) If you also find it fun, feel free to test it and/or give me feedback.

```shell
$ kubectl apply -k https://github.com/zwindler/podsweeper//deploy/base?ref=v0.1.4
namespace/podsweeper-game created
...
$ kubectl wait --for=condition=ready pod -l app.kubernetes.io/name=podsweeper \
    -n podsweeper-game --timeout=60s
pod/gamemaster-54f4dddcc4-6m88z condition met
```

Have fun!